Wed 7 Nov, 2012

What the election outcome says about election forecasting

Filed under: Politics. Tags: Barack Obama, Mitt Romney, Presidential election

[P]olitics is a field perfectly designed to foil precise projections. … You can’t tell what’s about to happen.

— David Brooks on the 2012 presidential election, New York Times, October 23

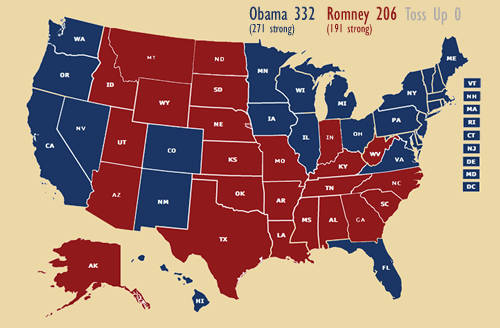

As of this writing, my state-by-state projection of the presidential election outcome is 49 for 49. If Florida, the only state I said was a close call, ends up in Obama’s column, that record will improve to 50 for 50.

This result says little about my own predictive abilities, however, and a great deal about the ability of political science to make meaningful predictions and to understand the fundamental factors driving presidential election outcomes.

My 2012 electoral college prediction, as posted here on the morning of the election

What quick lessons can we draw here?

First, it is possible to make predictions about election outcomes, especially about national elections in this era of rich state polling data. In fact, between basic economic indicators and properly-adjusted battleground-state polling data, we can actually have a reasonably high degree of confidence in the shape of election outcomes, before any ballots are cast.

Second, political pundits and the media were simply making up storylines such as “momentum,” a tied race and “razor-thin” margins.

It’s true that Romney received a significant bump in the polls after the first presidential debate, but far from beginning an upward trend for him, the polling data immediately began to settle slowly back to where it had been. There was simply never the slightest evidence for “momentum,” and aside from perhaps that moment after the debate, no hint that the race was particularly close.

In fact, media narratives of significant change over time or uncertainty in the presidential race were never really true. Media coverage of individual poll results, with suggestions each time that there had been a change in the race or that no one knew what was going on, was meaningless. Here, David Brooks was right in calling on himself to stop looking at each individual poll, and to focus only on poll averages.

A more nuanced story here would focus on how seriously to take polls generated with different assumptions about the demographics of the voting public. Models like Nate Silver’s take seriously issues like cell phone usage and adjustments for partisan affiliation, and assign different weights to different polls as a result.

The lesson here? Never pay any attention whatsoever to individual poll results, but only to aggregated, weighted poll numbers—or to analysts who are willing to use them.
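To make the idea of "aggregated, weighted poll numbers" concrete, here is a minimal sketch of a weighted poll average. It is not Nate Silver's model or anyone else's actual method. The weighting rule (square root of sample size, halved every seven days of poll age), the `Poll` fields, and the function names are all assumptions chosen for illustration, and the poll numbers are invented.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Poll:
    """One hypothetical poll result (all fields are illustrative)."""
    candidate_a_pct: float  # share for candidate A, in percent
    candidate_b_pct: float  # share for candidate B, in percent
    sample_size: int        # number of respondents
    days_old: int           # days between the poll and the forecast date


def poll_weight(poll: Poll, half_life_days: float = 7.0) -> float:
    """Weight a poll by sample size and recency.

    Assumption: the weight grows with the square root of the sample size
    and halves every `half_life_days`. Real models use richer adjustments
    (pollster quality, likely-voter screens, cell phone coverage).
    """
    recency = 0.5 ** (poll.days_old / half_life_days)
    return (poll.sample_size ** 0.5) * recency


def weighted_margin(polls: list[Poll]) -> float:
    """Weighted average of the (A minus B) margin, in percentage points."""
    if not polls:
        raise ValueError("need at least one poll")
    weights = [poll_weight(p) for p in polls]
    margins = [p.candidate_a_pct - p.candidate_b_pct for p in polls]
    return sum(w * m for w, m in zip(weights, margins)) / sum(weights)


# Invented numbers, for illustration only; these are not real 2012 polls.
polls = [
    Poll(49.0, 47.0, sample_size=900, days_old=1),
    Poll(46.0, 48.0, sample_size=400, days_old=10),
    Poll(50.0, 46.0, sample_size=1600, days_old=3),
]
print(f"Weighted margin: {weighted_margin(polls):+.1f} points")
```

In this sketch the small, ten-day-old poll showing candidate B ahead barely moves the average, which is the point: one outlying poll is not news, while the weighted aggregate changes slowly.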

Finally, it’s always, first and foremost, about the fundamentals.

What do I mean by this? That most of a presidential election outcome can be predicted with a handful of basic economic indicators, leaving very little room for such factors as candidate personalities, ideological differences, campaign strategies, or even the national mood.

As a result, I think most political scientists are inclined to view predictions like mine as akin to cheating, since they rely heavily on up-to-date polling data to predict outcomes in close battleground states.

In June, by contrast, three political scientists—John Sides, Seth Hill, and Lynn Vavreck—offered a simple model based on nothing more than whether the incumbent was running, his approval rating and the percentage change in GDP per capita at that point in the year. This model predicted Obama as a strong favorite to win reelection.

Ezra Klein admitted yesterday that he just couldn’t believe that these simple indicators were more important, and better predictors of the November outcome, than how the candidates were polling in June, everything that would happen over the next five months, or even the generally weak state of the economy. Yet the political scientists told him to step back and see the forest instead of the trees, and they were right.

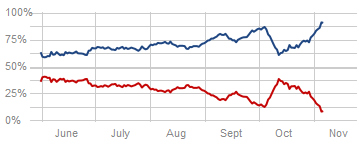

Because presidential races are mostly about fundamentals that can be seen well in advance, there is actually a remarkable consistency to presidential races. Here is how Nate Silver’s model estimated President Obama’s chances for reelection over the last few months:

Obama’s odds of winning the electoral college over time, as predicted by Nate Silver’s model

As you can see, this cake was baked long ago. President Obama began the summer with a respectable lead, and maintained that lead until the election. Yes, there were modest bumps along the way. This was partly because the economic fundamentals were improving, but also because not every percentage point in the vote can be entirely determined by GDP or employment figures; candidates and campaigns do matter, at least on the margins, and often temporarily.

So, for instance, Romney did receive an unusually strong bump in the polls from the Denver presidential debate. Yet the polls soon began a slow, but steady, return to the levels they’d been maintaining all along. Hurricane Sandy, likewise, seems to have resulted in a bump for President Obama, but he was already trending up, slowly making up for the temporary, modest effect of the first debate. And if the election hadn’t been held for a while, it seems highly likely that the effect of his performance after the hurricane, too, would have diminished and mostly disappeared over time.

In short, most factors during the campaign will matter little, and their impact will wane before long. The fundamentals, however, remain constant.

A final note

I understand that today, many in the pundit class have dropped their beliefs that Nate Silver’s analysis was wrong, and have turned to criticizing him, in hindsight, as having done nothing more than read and report the state polls. They’re wrong, of course, and the easiest indication of this is that most of them couldn’t read the polls in the same way that Silver did.

My own election prediction required little more than being an informed consumer of election data, and of models such as Silver’s. The only difficult call for me was Florida. Here I assumed that the upward effect of Hurricane Sandy on Obama’s approval rating would continue a bit longer, and that the economic fundamentals were returning him to a slightly higher level, than a model like Silver’s was set up to conclude.

Silver’s own work, however, while perhaps a relatively straightforward application of polling analysis and political science, was far from trivial. Constructing his model required careful assessment and balancing of a variety of factors and assumptions, and while this required mathematics no more advanced than linear regression, he was able to accomplish far more with his analysis than political punditry could.

DShirley says:

Part 1: While only a "political novice"…a few FACTS were revealed on election night in that this nation has a growing minority that is not in lock step with GOP philosophy (guns, immigration, job creation, economics (aka trickle down) and race (white majority fear of being a minority political bloc by restricting voting in minority areas)) or "rhetoric". Besides, the last GOP administration is directly responsible for exacerbating the fiscal crisis and national debt, mainly by fighting 2 wars on credit without engaging the public in a national effort, and enacting irresponsible tax breaks while increasing spending, mainly in defense, by doling out contracts in the billions of dollars for 2 long-term occupations (I am a veteran of the Iraq war and saw first hand what our money bought!!).

DShirley says:

Part 2: What is also believed by Americans who voted for President Obama is that more wealth than ever before is being concentrated (already a proven fact) into the hands of a few, squeezing out the middle class, not a good recipe for a nation's economy that depends on consumer spending. The business class in this country is undermining the economy and the nation by putting too much emphasis on their own self preservation without a "country first" attitude, and with a bad psyche (something artificially created in themselves with the election of President Obama, for whatever illogical reason!).

DShirley says:

Part 3: Our nation is more urbanized than ever, so education and job creation are a must to satisfy that urban populace's desires and expectations about life. I say this because, as a minority, I see that resources are not engaged in (and are often directed away from) our communities, and blacks/hispanics are left hyper-segregated in these jobless, declining-tax-base (gotta have a job to own a home and pay property taxes!!), educational-resource-starved areas/communities. As a result, social behavior is influenced by direct policy decisions of neglect and society is put on edge….often leading to white majority excuses that the money is not well spent because of bad minority culture! Racism is alive, passive, ugly, and destructive. I know, just visit Detroit; I grew up there….I know first hand the impacts of what long term racism and hyper-segregation can do (to one's spirit, attitude, and culture; it breeds hopelessness and destroys desire), especially when there are no jobs or quality education available for a whole generation of people.

DShirley says:

Part 4: Politics is also local. If an uninformed public (Detroit for example) elects leaders who are corrupt, the hole only gets bigger…..now Detroit has good leadership. However, one cannot continue to justify not engaging with a whole city on account of a few bad apples. As long as urban areas remain on the landscape and people are segregated by race and economics (which usually aligns with race: hint, minorities), the GOP will not make inroads with their message (which is one of hate when the onion is peeled back). Those policies derive from an "idea", and that idea is to preserve what one feels is the "dominant" culture……can anyone explain what our dominant culture is anymore????